by Doug Coulter » Wed Jul 02, 2014 12:58 pm

Knowing Jerry, and that set of optics, I'd bet quite a bit we'll be seeing some great pix soon, even though he lives in a place, errrm, not that well suited to looking at the sky if I read my weather maps right. That might be why he made it so adjustable - he might take it to a mountain somewhere with clear air and low sky light pollution...I know that's why I'd have done that.

The scope has a rather long focal length, i.e., it's easy to have too much magnification for it - it's not a wide field of view unless he rips out the current eyepiece holder and puts in a larger diameter one...not a big deal for him, but it frustrated me when I owned that scope.

Someday, I hope to convince him to build "Coulter's stochastic smoother" for it. I'll put details of that on another thread, it's why I got the thing myself, but that project petered out here - I was busy also running a business at the time.

But the main idea is simple. Use a really fast sample rate (about 200-300 Hz is right for the turbulence cells in the jet stream), and simply toss out the frames that have smear, adding the rest together instead. A more-advanced version might deconvolve simple smears where possible, when there's a point source guide star in the FOV. But looking at, say, the moon or Jupiter at high magnification with that scope was like looking at something at the bottom of a swimming pool just after someone cannon-balled into it - there are multiple smears inside the eye's averaging time, so the FPS required for movies has nothing to do with this problem. I did bother to do the math and a few hundred Hz (or the inverse for a shutter speed) is about what you need to get above Nyquist for the air effects.

This might require a multichannel plate with high quantum efficiency, close-coupled to the camera sensor. And it'd have lousy dynamic range, so you put a third layer on the front (that loses you 2/3 of the photons, so now you really need that light amplifier) - which is a B/W LCD screen - you make pixels in it dark to keep from "clipping" the bright spots in the image, and that data can then be combined with the sensor data to give you more dynamic range than the sensor alone has...

This also has the advantage for deep space work of only keeping pix that have a photon from the object of interest, but you then have to be careful you don't only see what you expect. At least, you get rid of hours of sky light pollution per kept frame - only keep the ones with real data in them.
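To make the "toss the smeared frames, add the rest" idea concrete, here is a minimal sketch in plain Python. Everything specific in it is an assumption for illustration, not something from the design described above: the `sharpness` score (sum of squared neighbour differences) is just one plausible way to spot smear, `keep_fraction` is a made-up knob, and the frames are assumed to be already aligned, so no shift-and-add registration is done.

```python
def sharpness(frame):
    """Score a frame by the sum of squared differences between neighbouring pixels.

    Smeared frames have weaker pixel-to-pixel gradients, so they score lower.
    This metric is an assumption; other smear detectors would work too.
    """
    rows, cols = len(frame), len(frame[0])
    total = 0.0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:
                total += (frame[r][c + 1] - frame[r][c]) ** 2
            if r + 1 < rows:
                total += (frame[r + 1][c] - frame[r][c]) ** 2
    return total


def stack_sharp_frames(frames, keep_fraction=0.1):
    """Keep the sharpest fraction of frames and add them together pixel by pixel.

    keep_fraction is a hypothetical tuning parameter. Frames are assumed to be
    equal-sized 2D lists that are already registered to each other.
    """
    if not frames:
        raise ValueError("need at least one frame")
    n_keep = max(1, int(len(frames) * keep_fraction))
    kept = sorted(frames, key=sharpness, reverse=True)[:n_keep]
    rows, cols = len(kept[0]), len(kept[0][0])
    stacked = [[0.0] * cols for _ in range(rows)]
    for frame in kept:
        for r in range(rows):
            for c in range(cols):
                stacked[r][c] += frame[r][c]
    return stacked, n_keep
```

In practice you would feed this a burst captured at a few hundred frames per second and tune the fraction kept against how bad the seeing is. A real version would also want to line the kept frames up against each other before adding them.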

I've been trying to sell this idea to astronomers and guys at NASA for decades, but no. Back then the answer was: long exposures are it, we just live with the sky background and smear. No need for that, or for a laser fake star, if you know your DSP - which was, admittedly, a lot more expensive then than now.

I've also agitated with the two guys I know who have access to fabs to make this fancy 3 part sensor. No takers yet...but it would be a huge breakthrough if someone bothered to do it all in one "chip" as you could have all the pixels aligned perfectly between the layers.
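As a rough illustration of why pixel alignment between the layers matters, here is a sketch of how the LCD layer's data might be combined with the sensor data to extend dynamic range. It assumes things not stated above: a linear 8-bit sensor (`FULL_SCALE`), a known per-pixel LCD transmission between 0 and 1, and a perfect one-to-one mapping between LCD pixels and sensor pixels. The function names and thresholds are hypothetical.

```python
FULL_SCALE = 255  # assumed 8-bit sensor; purely illustrative


def reconstruct_scene(sensor, transmission):
    """Estimate scene brightness by dividing each reading by its LCD transmission.

    Assumes the sensor is linear and each LCD pixel sits exactly over one
    sensor pixel, with transmission values in the range (0, 1].
    """
    return [
        [s / t for s, t in zip(sensor_row, trans_row)]
        for sensor_row, trans_row in zip(sensor, transmission)
    ]


def update_mask(sensor, transmission, high=0.9 * FULL_SCALE, step=0.5, floor=0.01):
    """Darken the LCD pixel in front of any sensor pixel that is close to clipping.

    high, step and floor are hypothetical tuning values.
    """
    return [
        [max(floor, t * step) if s >= high else t
         for s, t in zip(sensor_row, trans_row)]
        for sensor_row, trans_row in zip(sensor, transmission)
    ]
```

If the layers are misaligned, a reading gets divided by the wrong transmission value, which is one reason doing it all in one chip is attractive.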

Posting as just me, not as the forum owner. Everything I say is "in my opinion" and YMMV -- which should go for everyone without saying.

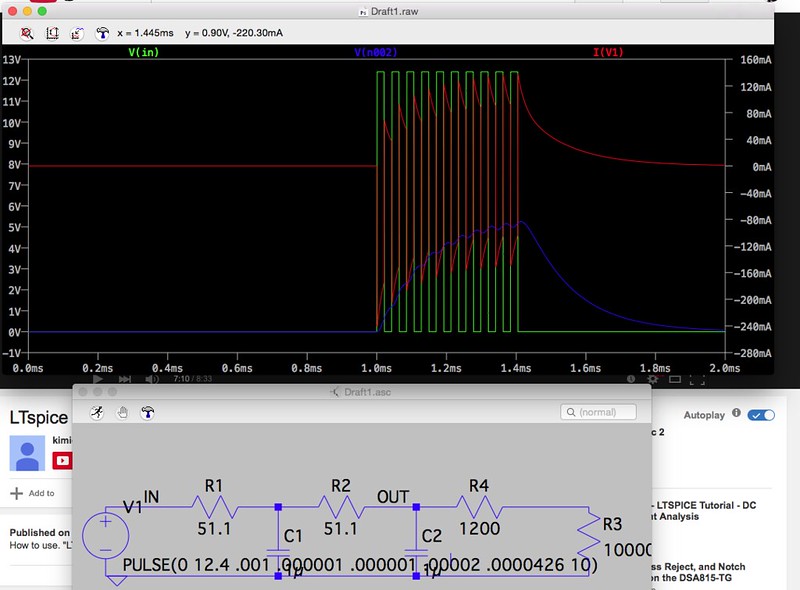

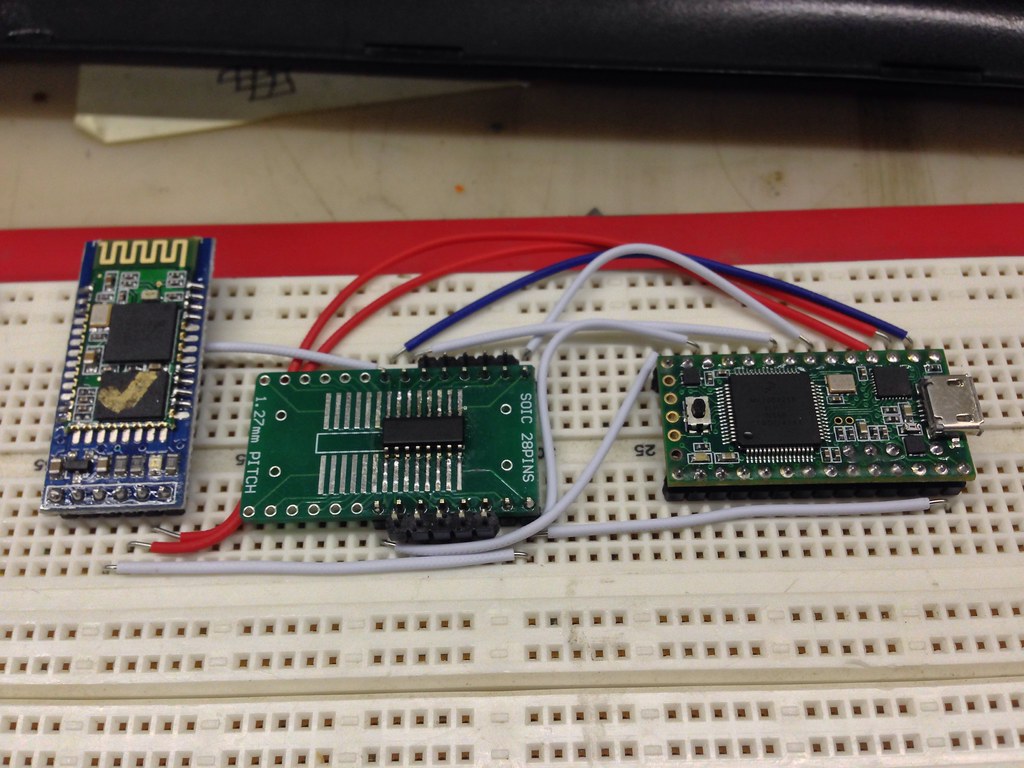

Scope mount control prototype by macona, on Flickr

Parts powdered and ready for baking. by macona, on Flickr

IMG_8909 by macona, on Flickr

You'll post some stunning time exposures soon after you get the controls calibrated, won't you?